The AI SRE that teaches itself

RunLLM connects to your observability tools, code, and docs. When an alert fires, it works with you to find the root cause and deliver next steps — in Slack, in minutes.

Self-Teaching

Knows your stack, day one

When you connect any tool to RunLLM, it maps the entire schema — what dashboards exist, what metrics back them, and where in your codebase those metric streams originate. During an incident, this enables RunLLM to pinpoint the right data in the right system to accelerate RCA and remediation.

Automatic discovery

Maps dashboards, metrics, log schemas, and dependencies for every system. No manual configs.

Cross-system connections

Understands how systems depend on each other, so a spike in one traces back to a change in another.

Always current

No runbooks required

Builds operational knowledge from your tools and data. Works with what you have, or don’t have.
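To make the idea of tracing a spike in one system back to a change in another concrete, here is a minimal sketch. It assumes a dependency map and a list of recent changes are already available; the service names other than checkout-service, the data shapes, and the function name are hypothetical and do not describe RunLLM's internals.

```python
from collections import deque

# Hypothetical dependency map: service -> services it depends on.
DEPENDS_ON = {
    "checkout-service": ["payments-api", "orders-db"],
    "payments-api": ["orders-db"],
    "orders-db": [],
}

# Hypothetical record of recent changes, keyed by service name.
RECENT_CHANGES = {
    "orders-db": "connection pool config changed",
}


def trace_upstream_changes(service, depends_on, recent_changes):
    """Walk breadth-first from `service` through its dependencies and
    return (service, change) pairs, nearest dependencies first."""
    seen = {service}
    queue = deque([service])
    found = []
    while queue:
        current = queue.popleft()
        if current in recent_changes:
            found.append((current, recent_changes[current]))
        for dep in depends_on.get(current, []):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return found


print(trace_upstream_changes("checkout-service", DEPENDS_ON, RECENT_CHANGES))
```

A breadth-first walk is used here so that changes closest to the alerting service are listed first, which is usually where an investigation would start.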

Collaborative investigation

Your investigation partner

RunLLM is built to work with you. Let it investigate autonomously or provide guidance at different points during the investigation. Your input improves investigations in real time, teaching RunLLM what matters most for next time.

Glass Box Reasoning

Hypotheses are shared as they form, ranked by confidence, with the reasoning visible.

Better Together

Confirm, correct, or reprioritize. Point the investigation in the right direction before time is spent on the wrong path.

Rapid Learning

Corrections and prioritization provide key signals. Over time, RunLLM builds a deeper understanding of your environment: what’s connected, what breaks, and what to check first.
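The confirm, correct, and reprioritize loop described above can be illustrated with a small sketch. The `Hypothesis` type, the 0.0 to 1.0 confidence scale, and the way feedback adjusts scores are all assumptions made for illustration, not RunLLM's actual scoring.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Hypothesis:
    summary: str
    confidence: float  # illustrative 0.0 to 1.0 scale (an assumption)


def rank(hypotheses):
    """Return hypotheses ordered from most to least confident."""
    return sorted(hypotheses, key=lambda h: h.confidence, reverse=True)


def apply_feedback(ranked, position, verdict):
    """Apply an engineer's verdict to the hypothesis at `position`
    and return a fresh ranking. 'confirm' pins it to the top score,
    'reject' drops it to zero (both rules are illustrative)."""
    if verdict not in ("confirm", "reject"):
        raise ValueError(f"unknown verdict: {verdict}")
    new_score = 1.0 if verdict == "confirm" else 0.0
    adjusted = [
        replace(h, confidence=new_score) if i == position else h
        for i, h in enumerate(ranked)
    ]
    return rank(adjusted)


# Placeholder hypotheses; the summaries are deliberately generic.
ranked = rank([
    Hypothesis("placeholder hypothesis A", 0.5),
    Hypothesis("placeholder hypothesis B", 0.7),
    Hypothesis("placeholder hypothesis C", 0.2),
])
for h in apply_feedback(ranked, 0, "reject"):
    print(f"{h.confidence:.1f}  {h.summary}")
```

Rejecting the top hypothesis early moves the next candidate to the front, which is the point of steering before time is spent on the wrong path.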

Anatomy of an investigation



Alert fires

PagerDuty sends an alert: elevated 5xx errors on checkout-service.


Context gathered

RunLLM examines the alert details: all errors are 500s, triggered after 15:19 UTC, and concentrated on a single endpoint. It pulls the relevant Grafana dashboard and checks for correlated signals.

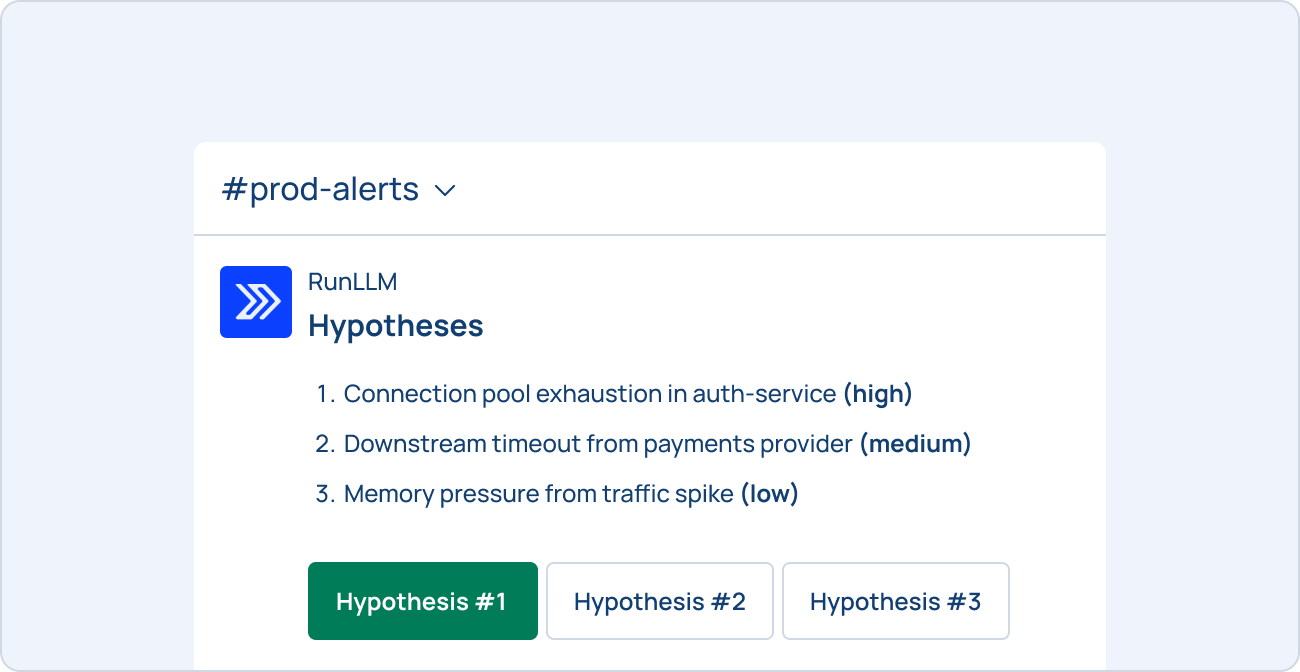

Hypotheses surfaced

Three hypotheses appear in Slack, ranked by confidence.

Engineer steers

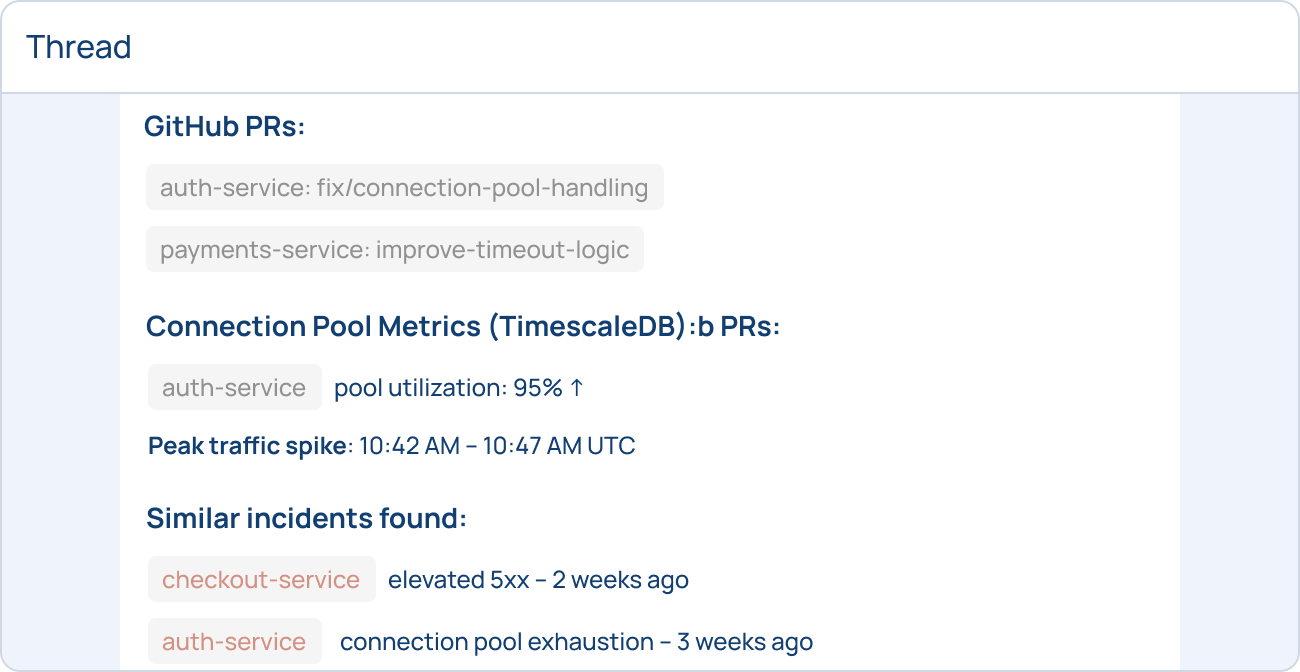

The on-call engineer confirms that hypothesis #1 looks right. RunLLM also checks #2, just in case. Then it pulls config changes from the PR, checks connection pool metrics, and correlates with a similar incident three weeks ago.

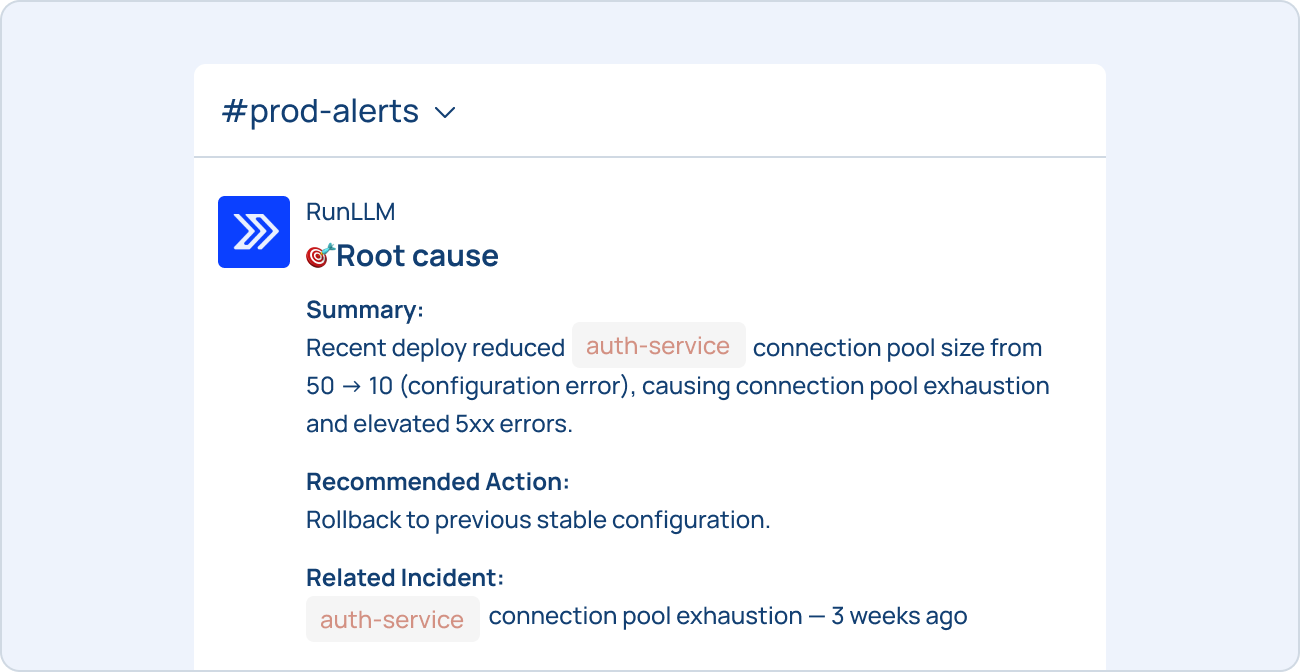

Root cause delivered

Root cause: the deploy reduced connection pool size from 50 to 10 (config error). RunLLM recommends rollback with the exact command and links to the previous related incident.
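As a rough illustration of how a config change like this one can be surfaced, the sketch below diffs two config snapshots. The key names (`pool_size`, `timeout_s`) and the snapshot format are hypothetical; only the 50 to 10 change comes from the example above.

```python
def diff_config(before, after):
    """Return {key: (old, new)} for every key whose value differs.
    Keys present on only one side show None for the missing value."""
    changes = {}
    for key in sorted(set(before) | set(after)):
        old, new = before.get(key), after.get(key)
        if old != new:
            changes[key] = (old, new)
    return changes


# Hypothetical snapshots taken before and after the deploy.
before_deploy = {"pool_size": 50, "timeout_s": 30}
after_deploy = {"pool_size": 10, "timeout_s": 30}

print(diff_config(before_deploy, after_deploy))
```

A diff like this only shows what changed; deciding that the change explains the errors still depends on correlating it with the connection pool metrics and the earlier incident.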

After resolution

RunLLM logs the investigation and captures what worked. The next time checkout-service alerts, RunLLM will check the connection pool config first.

Beyond firefighting

Most AI SRE tools automate what on-call engineers already do. RunLLM also does work humans can’t — processing massive volumes of data in parallel, continuously, without fatigue.

Predictive risk identification

Analyzes patterns across past incidents, deploys, and operational data to flag emerging risks before they cause incidents.

Intelligent alert filtering

When a system degrades, dozens of alerts fire across services. RunLLM groups them, identifies the underlying cause, and investigates once.
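One simple way to picture alert grouping is a time-gap heuristic: alerts that fire close together are treated as one burst and investigated once. This is a sketch of that heuristic only, not RunLLM's method; the alert fields, service names other than checkout-service, and the five-minute window are assumptions.

```python
def group_alerts(alerts, window_s=300):
    """Group alerts into bursts. An alert joins the current burst if it
    fired within `window_s` seconds of the previous alert in that burst."""
    groups = []
    for alert in sorted(alerts, key=lambda a: a["ts"]):
        if groups and alert["ts"] - groups[-1][-1]["ts"] <= window_s:
            groups[-1].append(alert)
        else:
            groups.append([alert])
    return groups


# Hypothetical alerts; `ts` is seconds since an arbitrary start time.
alerts = [
    {"service": "checkout-service", "ts": 0},
    {"service": "payments-api", "ts": 60},
    {"service": "orders-db", "ts": 120},
    {"service": "search-api", "ts": 1000},
]

for burst in group_alerts(alerts):
    print([a["service"] for a in burst])
```

Time proximity alone can merge unrelated alerts, so a real grouping step would likely also use dependency information between the services involved.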

Anomaly detection at scale

Detects low-signal or silent problems in logs, metrics, or customer tickets that haven’t triggered an alert.

Enterprise-ready security

Built for production environments where security is non-negotiable.

SOC 2 Type II Certified

Independently audited controls for security, availability, and confidentiality.

Read-Only By Default

Investigates and recommends. Does not take action unless you explicitly grant permission and approve.

Your Data Stays Yours

Customer data is never used to train general models. Every deployment is isolated. Your data is never shared with other customers.

Scoped access

OAuth-based integrations use the minimum permissions your tools support. You control what RunLLM can see and do.

Audit logging

Every action is logged and traceable — what RunLLM queried, what it concluded, and what it recommended.
